My experience switching BetaList from Heroku to Render

I started BetaList as a Tumblr site back in 2010 to give startup founders and early adopters a place to discover each other and share feedback. Since then it has evolved a lot, both functionally and technically.

It was a Sinatra app for a while, and later I switched to Ruby on Rails 3. As the years went on I kept the codebase up to date by upgrading whenever a new major Rails version came out. (It’s running on Ruby on Rails 7 now with the Hotwire goodness.)

I had played with the idea of moving away from Heroku for a while, for a few reasons. The most concrete one was cost, but I also felt their product development had stalled over the last few years. And although I’ve had some good experiences with their support team, overall the company seemed more interested in serving huge enterprise clients than a startup like my own.

At one point one of their sales reps called me (still not sure how they got my phone number) and repeatedly emailed me trying to upsell me on a $1,400/mo Enterprise plan with a 12-month commitment. I guess someone needed to hit their quota 😅

Render

For these reasons I had been exploring alternatives. I briefly tried self-hosting one app, but when the server went down one night because my log files had filled up the disk (apparently they don’t automatically get deleted?), I realized I didn’t want to play devops engineer.

I ultimately settled on Render. They offer a “Devops done for you” product similar to Heroku’s at a more reasonable price point, the product is actively developed, and the team seems more in touch with their customers, not just the enterprise ones.

Over the past year, I’ve been migrating all of my apps from Heroku to Render. First some smaller apps, and eventually some of my bigger sites like Startup Jobs. Since many makers are curious about switching to Render, I figured it would be valuable to document one of those migrations for others to benefit from.

I recently switched BetaList to Render as well and documented my approach below. I’ll also share some of the unexpected issues I ran into, and explain how I solved them.

Disclosure — Render was a BetaList sponsor at the time I wrote this post.

Previous Heroku stack

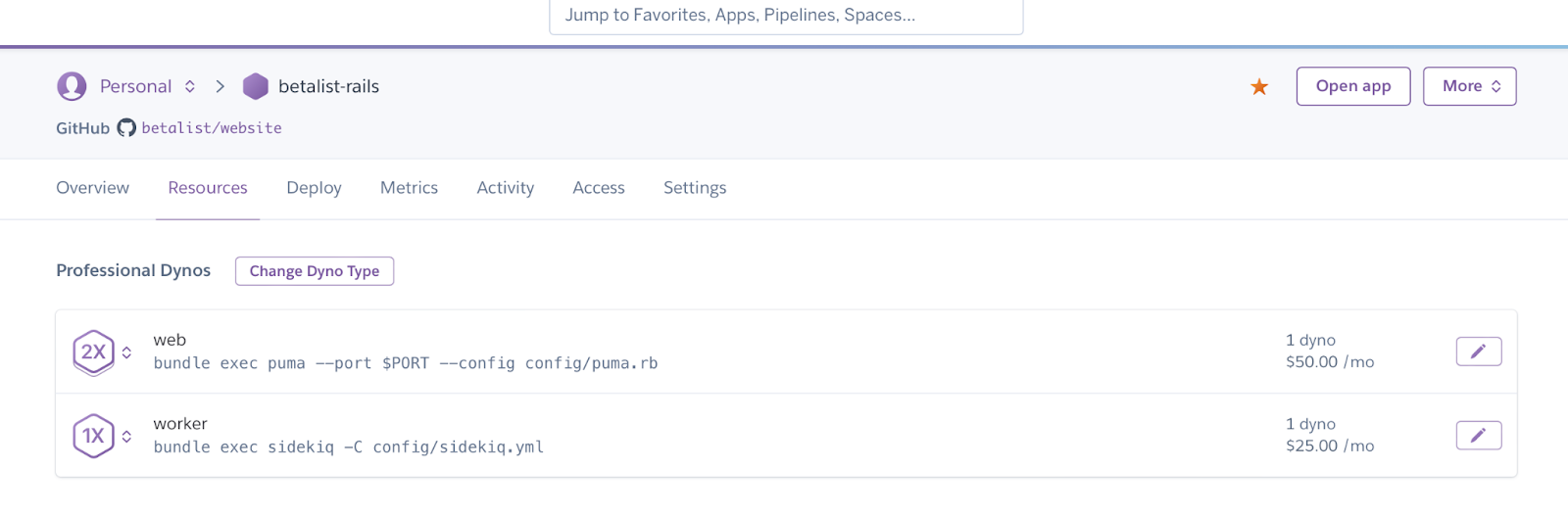

- Rails 7.0.4 / Ruby 3.1.2

- Web dyno ($50/mo)

- Worker dyno for Sidekiq background jobs ($25/mo)

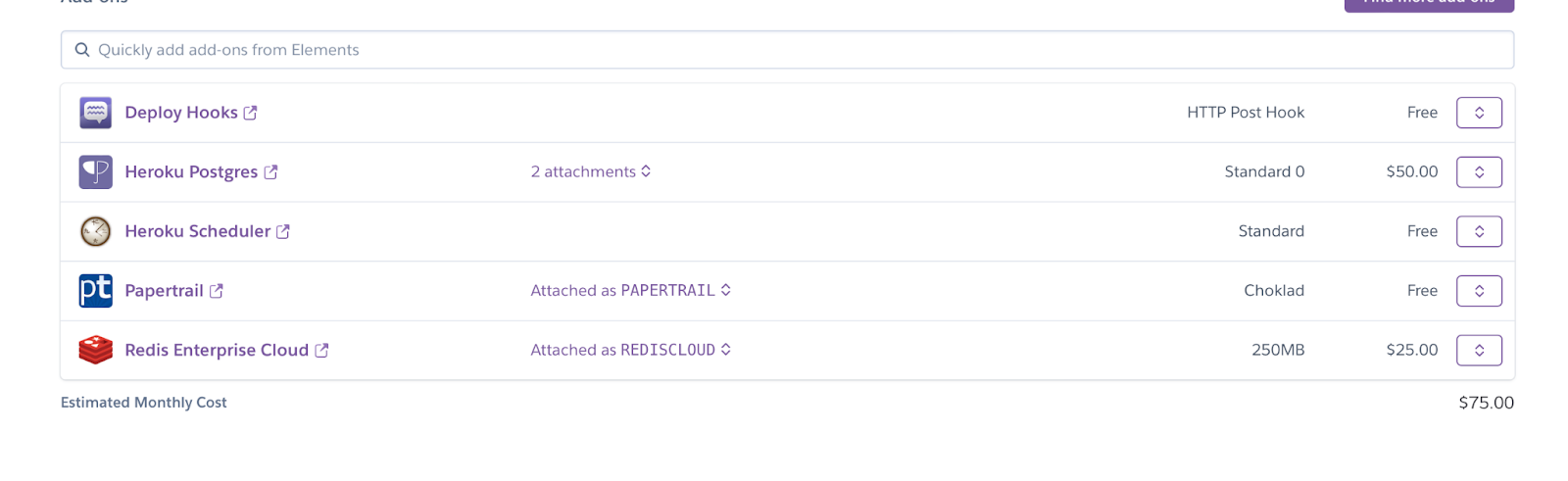

- Postgres ($50/mo)

- Redis (for caching and Sidekiq background jobs) ($25/mo)

- Scheduler ($5/mo)

- Adept Scale for scaling web dynos up and down ($18/mo)

On average I was paying around $175/mo at Heroku for BetaList. Not horrible, but if you run multiple sites like I do, it starts to add up.

The migration process

Here’s roughly how I approached the switch:

- Deploy a few smaller apps on Render.

- Prepare the BetaList codebase.

- Deploy a test version to Render.

- Import (sanitized) production data.

- Migrate for real.

1. Migrate a few smaller apps

Before migrating BetaList, I got familiar with Render and render.yaml by moving a few smaller apps first. Rather than configuring everything through the dashboard (which is still possible), you can write a file that lives in your codebase and defines which services to run and how they are configured. It’s one of my favorite features.

You spend a lot of time configuring your servers, so it’s nice to have a concrete copy of all that work. It also makes it easier to experiment, since you can always revert. Plus, you can easily copy your server setup between projects.
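To make that concrete, here is a minimal sketch of what a render.yaml for a Rails app with a Sidekiq worker, Postgres, and Redis could look like. It is not BetaList’s actual file: every name and command below is a placeholder, and the field names follow Render’s Blueprint format as I understand it, so check them against the current spec before relying on them.

```yaml
# render.yaml (illustrative only; names and commands are hypothetical)
services:
  - type: web
    name: example-web
    runtime: ruby
    buildCommand: bundle install && bundle exec rails assets:precompile
    startCommand: bundle exec puma -C config/puma.rb
    envVars:
      - key: DATABASE_URL
        fromDatabase:
          name: example-db
          property: connectionString
      - key: REDIS_URL
        fromService:
          type: redis
          name: example-redis
          property: connectionString
      - key: RAILS_MASTER_KEY
        sync: false # value is entered in the dashboard, not stored in git

  # Background worker; in practice it needs the same envVars as the web service.
  - type: worker
    name: example-sidekiq
    runtime: ruby
    buildCommand: bundle install
    startCommand: bundle exec sidekiq

  - type: redis
    name: example-redis
    ipAllowList: [] # empty list: no access from outside Render

databases:
  - name: example-db
```

Because the file is versioned with the app, reverting an experiment is just a git revert, and copying the setup to another project is mostly a matter of renaming things.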

2. Preparing the codebase

Good preparation can save a bunch of work. I started by moving all of my API keys and other sensitive data from environment variables to the more portable Rails Credentials. I also backed up my Heroku config variables to a local file, just to be safe. (Don’t commit this file to your git repository.)
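If you move secrets over gradually rather than all at once, a small lookup helper can keep the app working in the meantime. The sketch below is an assumption for illustration, not code from BetaList: `fetch_secret` is a hypothetical helper, and the keys and values are made up. It checks a credentials-like object first and falls back to an environment variable whose name is derived from the lookup path. In a Rails app you would pass `Rails.application.credentials`, which supports `dig`; a plain Hash stands in for it here so the example runs on its own.

```ruby
# Look up a secret in a credentials-like object first, then fall back to an
# environment variable. `credentials` is anything that responds to #dig.
def fetch_secret(credentials, *path, env: ENV)
  value = credentials.dig(*path)
  return value unless value.nil?

  # [:mailer, :api_key] becomes "MAILER_API_KEY"
  env_key = path.map { |part| part.to_s.upcase }.join("_")
  env.fetch(env_key) { raise KeyError, "missing secret: #{path.join('.')}" }
end

# Hypothetical values for illustration only.
credentials = { stripe: { secret_key: "sk_test_from_credentials" } }
legacy_env  = { "MAILER_API_KEY" => "from_env" }

puts fetch_secret(credentials, :stripe, :secret_key, env: legacy_env) # prints sk_test_from_credentials
puts fetch_secret(credentials, :mailer, :api_key, env: legacy_env)    # prints from_env
```

Raising on a missing secret, instead of returning nil, means a forgotten key fails loudly at the call site rather than surfacing later as a confusing API error.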
